The Real Magic of GPT-3

“Any sufficiently advanced technology is indistinguishable from magic.” — Arthur C. Clarke

The most bittersweet part of working in software is that I felt like I’ve lost just a little bit of my sense of wonder about the world.

When my phone navigates turn-by-turn to a remote campsite. When I can access the entire Encyclopedia Britannica with a few clicks. When I order a pizza delivery while in a metal tube at 30,000 feet in the air. It’s all utter magic!

Magic, that is, until I break it down into the individual components. And then it’s all the same: databases, APIs, networks, and fleets of servers.

But every once in awhile, I run across some piece of technology that’s frankly just hard to explain. It’s so wild and new, that I’m not sure how would I even start building it.

I felt that sense of wonder was playing around with GPT-3 via the OpenAI API. The entire time I was interacting with it… I couldn’t help but feel like I was getting a sneak peak at a fundamental shift in technology. I’d feed in input, and what I’d get back was surprise, delight, and even some inspiration.

I still can’t even really tell you how it all works much less why it should work.

Let me share a few examples and thoughts about why this is not just a big deal for AI and NLP… but an even bigger deal for human-computer interaction.

The API

Before I go further, I should quickly give an overview of how the API works. Here’s what it looks like in action…

Breaking down that video… at a high level, the API is as follows…

Input: Some sample text

Output: Predictive next text

You feed it some text… any text.

Could be song lyrics, CSV data, your favorite novel, a text conversation with a friend, whatever. The API then spits out the next few tokens of subsequent text. In the playground above, the output is just appended to the input whenever you hit “submit”.

You can tune a few parameters: you can cause the AI to be more creative in its answers, not to repeat earlier text, and to use particular tokens to start and end. But that’s about it.

There’s no training. No massive data uploads. No complicated set format. It’s all just text in whatever format you’d like.

You can think of it as a neural network that has already been trained on a massive amount of text across the internet. If you supply some starter text, GPT-3 will incorporate what you’ve written and produce some _extremely _credible output.

The fact that I could get up and running in 30 seconds is a big part of the reason that the whole thing seems like pure magic. If I give it a few lines, how could it possibly know what should come next?

Obviously, when given such an exciting new tool, the first thing I wanted to do was play around with it! I started feeding in a few different use cases, trying to get the AI to solve some problems for me.

Test 1: niche recommendations

Almost everyone I know has some set of niche hobbies—and it’s hard to get good recommendations for them. Maybe it’s the best new recipe or running shoes or hydroponics.

For me, the biggest problem is finding new books.

I want something that’s engaging, science-y, and ever-so-slightly outside of the content that I might normally discover.

The typical places I go for book recommendations are Twitter, Audible, GoodReads. But oftentimes the recommendations are a bit hit and miss.

I find they too often focus on the “newest” books that come out. Instead, I’m really looking more for interesting reads from years past that tend to outlast the hype cycle.

So, I fed in a few of recent books from my reading list to the AI to see what it’d recommend.

Here’s the text I presented as input…

The Accidental Superpower - Peter Zeihan

The Misbehavior of Markets - Benoit Mandelbrot

Predictably Irrational - Dan Ariely

The Man Who Solved the Market - Gregory zuckerman

Healing Back Pain - John E. Sarno

Skunk Works - Ben R. Rich

Loonshots - Safi Bahcall

Englightenment Now - Steven Pinker

The Death and Life of Great American Cities - Jane Jacobs

And here’s the next 6 books worth of output…

The Great Disruption - Paul Gilding

Superforecasting - Philip Tetlock

Power, Inc. - David Rothkopf

What's the Matter with White People? - Michael Tesler

New World Order - Benard Lemierre

Poor Economics - Abhijit Banerjee, Joseph E. Stiglitz

From reading quick descriptions, none of these books seem too far out of the realm of possibility. I really enjoyed Superforecasting. The other books are all new to me, but most are rated 4+ stars on Amazon.

Running this a few times, I’ve noticed the AI will make the most errors with authors. It will either mis-attribute real books by real authors (Joan Walsh_ is the author of What’s the Matter with White People, Esther Dulfo is the second author of _Poor Economics), or else invent fake books altogether (New World Order by Benard Lemierre isn’t actually a real book or a real author it seems!).

I’d have to read these books to really understand how good the recommendations are, but that said… it’s close! If nothing else, it’s a starting point.

Besides books, I’m always looking for recommendations on great board games to play with my roommates. So, I fed a few of my favorite board games in as input…

The Settlers of Catan

Scythe

Carcassonne

7 Wonders

Terraforming Mars

…and the API faithfully spit out some new board games for me…

Takenoko

The Castles of Burgundy

Castle Panic

Caylus

Lords of Waterdeep

Codenames

The AI did much better here, these are all real board games! I’ve played Lords of Waterdeep and Codenames, and enjoyed them both immensely.

I wanted to get a more scientific read of how ‘close’ the AI was. Was GPT-3 actually creating good recommendations? Or was it merely detecting that I was inputting board game names, and it was outputting others.

To test, I looked up these new games on BoardGameGeek, the definitive ratings site for, well, board games.

On BoardGameGeek, each game has…

- an overall rating scored from 1-10

- an optimal number of players

- a weight scored from 1-5 (how hard it is to learn, 1 is easily learned, 5 is very complex)

From the data I gave, I told the AI my favorite games scored high overall (7.82 average), were best played by an average of 3.5 people, and were medium weight (2.64 average).

I then took the averages for the results. Here’s how they compare…

| Criteria | Training Set | GPT-3 Recs |

|---|---|---|

| Average Rating | 7.82 | 7.55 |

| Average Optimal Players | 3.5 | 3.75 |

| Average Weight | 2.64 | 2.37 |

Lo and behold, very similar numbers!

Even with the fairly narrow criteria of “board games I like”, GPT-3 acted like a close friend. Without being trained specifically on this set, it just gave me good recommendations.

Better still, these recommendations can seem to reach arbitrary levels of depth. So long as someone has mentioned these words together on the internet, GPT-3 can surface them even more readily than a Google search.

Test 2: curing writer’s block

By now, you have likely seen a lot of different text completion threads on twitter. People feed in a blog post, a set of tweets, or a conversation, and GPT-3 begins to generate a bunch of new lines for it.

For fun, I tried plugging in a draft of one of my earlier posts into the API.

Here’s the text I’d written.

When buying a new product, it’s easy to “judge a book by its cover.” Doing a deep evaluation of a service with feature comparisons, build vs buy scenarios and ROI is really hard! It’s much easier to take a quick mental shortcut and assume the well-designed product must be the superior one…

That’s the reason why I tell most startup founders (especially engineers) to spend time on design and aesthetics. You literally can’t afford not to. There’s so many options out there, you’ll get zero traction if your app looks like garbage. [1]

Upon reflection, I realized that feedback was a bit surface level. How does it account for the Salesforces, Oracles, and Adobes of the world? I wouldn’t consider any of them to be the apex of visual design… yet they continue to grow.

Did these behemoths come to power at a different point in time? Or is there something more subtle at play? What’s going on?

I think there’s a different, but often overlooked explanation: a product’s UX matches its buyer, not necessarily its user.

There are two very distinct sales motions: ones targeted at practitioners, and ones targeting executives. No matter who ends up using your product, you have to satisfy the buyer.

I pasted it in and hit submit. Here’s what the AI produced…

It’s a small nuance, but it explains how a lot of successful companies have flourished.

Let’s explore this difference by looking at three very successful companies: Salesforce, Oracle and Microsoft. [2]

What we are about to embark on is a thorough exploration of the evolution of a feature set. Think of it as a user centered design case study. The twist is that the feature is being driven by two distinct “buyer” segments. I’ll highlight the differences, and then draw some conclusions.

Let’s start by comparing a well-known SaaS cloud CRM: Salesforce with its enterprise force-multiplier predecessor, Oracle Siebel.

Salesforce was founded in 1999 by an accomplished internet entrepreneur Marc Benioff. The idea was simple: what if an application provided salespeople with the information they needed, right when they needed it?

Benioff was the consummate salesman. As he pitched Salesforce, he described the typical CRM user. The message was clear: salespeople are busy, they hate paperwork, and would rather be talking to customers.

It’s one thing to watch text other people have posted. It’s entirely another to see an AI completing your own thoughts right before your eyes; nothing quite prepared me for it.

While the generated text doesn’t exactly follow the rest of the article… it’s not obviously wrong either. The transitions are smooth, and tie back to the original content I’d written. More interestingly, GPT-3 picked up on my convention of using [1] for endnotes, and added its own [2].

As I sat there and watched the autocomplete work, it actually gave me a few new ideas for both the structure and the content of the article!

That’s why I believe one of the killer use cases for GPT-3 is** curing writer’s block. **

My biggest challenge with writing can be simply starting a paragraph. It’s gotten to the point where I’ll start typing some half-formed string of words just to get in the rhythm of figuring out what should come next.

But with GPT-3 there’s another tool at my disposal. If I’m stuck, I can try a few variations of what “should come next” just to get my mind going. Even if they aren’t the right words, it’s enough of a prompt to get myself out of a rut. From there, I can curate, edit, and ideate.

By adding just a few lines, and then waiting for the result to teach me something, I’m effectively getting new ideas from the entire text GPT-3 was trained upon.

The whole experience has opened me up to the idea that the writing tools of tomorrow will be very different than the writing tools of today.

Instead of being rote recorders and spellcheckers, tomorrow’s editors will actually try to guide unstructured thought. Auto-completion, complex queries, and even the fresh idea generation will all be built into the text editors of the future.

It all means that great writers can spend less time writing and more time thinking.

Test 3: playing games

As a last test, I wanted to see what it would take to teach GPT-3 to solve a few different translation problems.

You might have already seen examples of code generation from english descriptions, which are great examples of this. I wanted to see how GPT-3 would handle some weirder translation sets.

Game 1: Analogies

If you’re like me, you probably took a number of standardized tests in high school.

One of the key parts of the SAT used to be analogies (though it’s not any longer due to studies which found these questions were biased). Given two words, and how they relate to one another, you’d have to pick two other words which have a similar relation.

I wanted to see whether GPT-3 would pick up on this peculiar testing format.

Here’s the training set I fed in…

medicine:illness :: law:anarchy

paltry:significance :: banal:originality

extort:obtain :: plagiarize:borrow

fugitive:flee :: braggart:boast

chronological:time :: ordinal:place

And then here’s a few new sets of words I tried, supplying only the inputs…

in: sullen:brood

out: fractious:gripe

in: iconoclast:convention

out: enfant terrible:rules

Not bad! A sullen person will often brood, as a fractious person will often gripe. An iconoclast defies convention, as an enfant terrible defies rules. GPT-3 seems to get it!

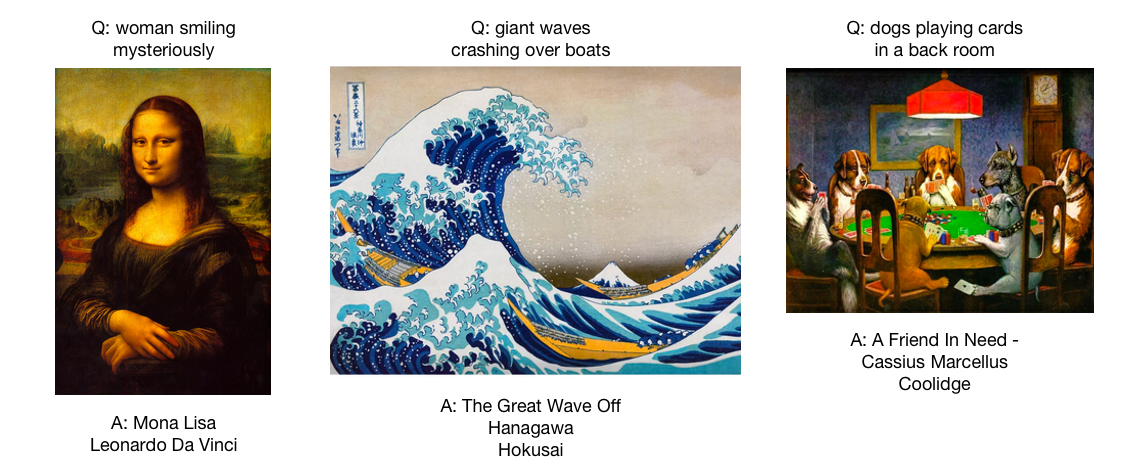

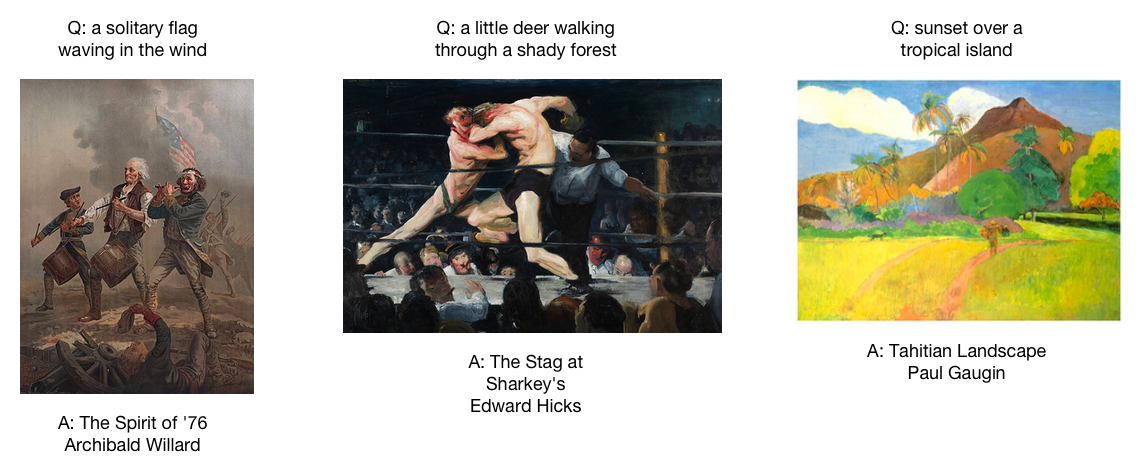

Game 2: Description of famous paintings

For the second game, I tried making things a little bit more difficult.

It’s relatively easy for a person to generate a description from a work of art. Much harder to say “think of a work of art that most resembles a solitary flag waving in the wind”.

Game 2 was trying to get the AI to match famous works of art just based upon a description alone. Here are some examples of training (though again, without images, those are just there for the reader).

Again, I fed in various inputs as training data…

Q: a woman smiling mysteriously

A: Mona Lisa - Leonardo Da Vinci

Q: giant waves crashing over boats

A: The Great Wave Off Kanagawa - Hokusai

Q: american flags lining the alleyway

A: The Avenue in the Rain - Childe Hassam

Q: dogs playing cards in a back room

A: A Friend In Need - Cassius Marcellus Coolidge

Q: people huddled around a dark table eating

A: The Potato Eaters - Vincent van Gogh

Q: a roman aquaduct running above a river

A: The Pont du Gard - Hubert Robert

And then tried feeding various inputs with the AI (the AI is generating the A’s) here.

Q: a solitary flag waving in the wind

A: The Spirit of '76 - Archibald Willard

Q: a little deer walking through a shady forest

A: The Stag at Sharkey's - Edward Hicks

Q: sunset over a tropical island

A: Tahitian Landscape - Paul Gauguin

Here are the paintings along with their queries…

Not bad. I’d give the AI a 2/3 on this one, I’m not quite sure where the answer #2 came from, though it is most definitely a famous painting and piece of art.

The real magic…

There were a few other examples I tried leading up to this post, though they didn’t work nearly as well. GPT-3 certainly isn’t a panacea or full AGI, and many of these examples definitely benefited from tuning and adjustments.

That said, the OpenAI API really made me take a step back and reconsider how simple working with AI could become.

Instead of trying to feed in all sorts of meta-parameters, training, and models… could you build a robust recommendation system just by feeding in a several thousand characters worth of data? I was surprised to learn that the answer is a definitive “yes”.

The system is so simple that literally anyone can provide inputs.

I think that’s where the real magic from the OpenAI API starts to come from. Yes, the model is really good. But even more than that… it’s really accessible.

You don’t need to understand the syntax of a for loop, how to make function calls, or the differences between interpreted and a compiled languages.

The real magic of OpenAI’s API and GPT-3 is that it can match you in the closest thing to human thought:** written text. **

The user can go straight from thoughts to text with minimal worry about how to structure that text for the machine. It’s a completely new paradigm not just for programming, but for thinking!

It’s easy to imagine authors and songwriters sitting at home and plugging a few lines into GPT-3 to generate new chapters or verses. Anyone who can write can teach the AI to play games, write code for you, or just brainstorm fresh ideas.

Getting started is easy… just write down a few examples in plain text, and then hit ‘submit’.

Now that’s real magic.

Thanks to Osama Khan, Kevin Niparko, and Peter Reinhardt for providing feedback on drafts of this post.